Jakob Schreiber, Shu Ching Chon

Klangklotz-Studie

Virtual Reality Performance

This study explores various ways of arranging sounds temporally and spatially in a virtual environment. The performer dives into a virtual world with the help of a head-mounted display, and the audience can follow her gaze on a projection. She can freely arrange virtual cubes, each representing a sound, in space. By drawing closed paths with a controller, she can arrange the cubes in time and connect them into loops. The performance on January 17, 2020, at the IMWI's "institute evening" focused on the creation of a sound sculpture. Most of the sounds originated from field recordings and were edited beforehand.
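The idea of turning a closed path into a loop can be sketched in Python. This is a minimal, hypothetical model, not the project's VR implementation: it assumes that each cube has a 3D position, that the drawn path is stored as a closed polyline, that a cube joins the loop when the path passes within `radius` of it, and that its onset time is proportional to its distance along the path. The names `Cube`, `closest_on_segment`, `loop_from_path`, `radius` and `loop_seconds` are illustrative only.

```python
import math
from dataclasses import dataclass

@dataclass
class Cube:
    name: str
    pos: tuple  # (x, y, z) position in the virtual space

def closest_on_segment(p, a, b):
    """Return (t, distance): t in [0, 1] locates the point on segment a-b closest to p."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    t = 0.0 if denom == 0 else max(0.0, min(1.0, sum(ap[i] * ab[i] for i in range(3)) / denom))
    q = [a[i] + t * ab[i] for i in range(3)]
    return t, math.dist(p, q)

def loop_from_path(path, cubes, radius=0.3, loop_seconds=4.0):
    """Turn a closed path into a sorted loop of (onset_seconds, cube_name) events.

    The last point of `path` connects back to the first; each cube within
    `radius` of the path is scheduled at its relative arc length along it."""
    segments = list(zip(path, path[1:] + path[:1]))
    lengths = [math.dist(a, b) for a, b in segments]
    total = sum(lengths)
    events = []
    for cube in cubes:
        best = None  # (arc_length, distance) of the closest passage found so far
        offset = 0.0
        for (a, b), seg_len in zip(segments, lengths):
            t, d = closest_on_segment(cube.pos, a, b)
            if d <= radius and (best is None or d < best[1]):
                best = (offset + t * seg_len, d)
            offset += seg_len
        if best is not None:
            events.append((best[0] / total * loop_seconds, cube.name))
    return sorted(events)

# A unit square drawn in the horizontal plane, plus three cubes.
square = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
cubes = [Cube("kick", (0.5, 0.05, 0)), Cube("rain", (1.02, 0.5, 0)), Cube("far", (3, 3, 0))]
events = loop_from_path(square, cubes)
print(events)  # [(0.5, 'kick'), (1.5, 'rain')]; "far" is not on the path
```

Mapping arc length to onset time means that cubes lying far apart along the path are also far apart in time; other schemes, such as spacing all selected cubes evenly, would be equally plausible.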

Developed in the context of the course Creative Coding: Virtual Reality.

Supervised by Patrick Borgeat.

The project received the NeurIPS Award for Outstanding Demonstration at the 33rd Conference on Neural Information Processing Systems (NeurIPS 2019) in Vancouver.

As an artistic development project, it was supported by a grant under the State Graduate Promotion Act (LGFG).

Vincent Hermann

Immersions

How does music sound to artificial ears?

In recent years, artificial intelligence has become rapidly more present in music and sound processing, especially in the form of neural networks and so-called deep learning. Google generates the voice of its assistant, Spotify analyzes millions of songs to compile personalized playlists automatically, and artificial neural networks compose pop songs and film music.

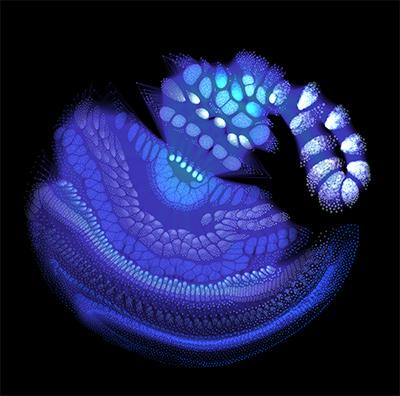

It is often difficult even for experts to understand what exactly happens inside these complex self-learning systems and how they arrive at their remarkable results. The project "Immersions" makes the inner workings of sound-processing networks audible. The basic idea is as follows: one starts with a sound snippet (e.g., a drum loop). An optimization procedure modifies this snippet so that it activates a certain area of the neural network. The modified clip can then serve as the starting point for a new optimization that targets another region of the network. This produces sounds that the network generates in a freely associative manner. At the same time, the processes inside the network are visualized, so that one can watch the system as it processes information.
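The optimization step can be illustrated with a toy example of activation maximization, i.e. gradient ascent on the input rather than on the network's weights. This is a minimal NumPy sketch under strong assumptions, not the project's code: the "network" is a single random dense layer with tanh, the "areas" are hypothetical index ranges of hidden units, and the snippet is random noise standing in for audio. What it does show is the chaining: the output of one optimization seeds the next, which targets a different region.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for a trained audio network: one dense layer with tanh.
N_IN, N_HIDDEN = 256, 32
W = rng.standard_normal((N_HIDDEN, N_IN)) / np.sqrt(N_IN)

def activations(x):
    """Activations of all hidden units for an input snippet x."""
    return np.tanh(W @ x)

def optimize_towards(x, units, steps=200, lr=0.1):
    """Gradient ascent on the input so that the mean activation of `units` grows."""
    x = x.copy()
    for _ in range(steps):
        h = W[units] @ x
        # gradient of mean(tanh(h)) with respect to the input x
        grad = W[units].T @ (1.0 - np.tanh(h) ** 2) / len(units)
        x = np.clip(x + lr * grad, -1.0, 1.0)  # stay within a valid sample range
    return x

snippet = rng.uniform(-0.5, 0.5, N_IN)  # stand-in for a short drum loop
region_a = np.arange(0, 8)              # first hypothetical target "area"
region_b = np.arange(8, 16)             # second hypothetical target "area"

step1 = optimize_towards(snippet, region_a)
step2 = optimize_towards(step1, region_b)  # chain: the result seeds the next optimization

print(activations(snippet)[region_a].mean(), activations(step1)[region_a].mean())
```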

One of the most important properties of neural networks is that they process information at different levels of abstraction. Accordingly, activation in one area of the network may be associated with simple short tones or noises, while activation in another area may be associated with more complex musical phenomena such as phrasing, rhythm or key. What exactly a neural network "listens to" depends both on the data it was trained on and on the task it is specialized for. In other words, music sounds different to different networks.

To make all of this directly experienceable, I developed a setup for generating and controlling sounds in real time. The material for the live performance is not songs or pre-programmed loops but the hallucinations of neural networks.

Independent study project.

Supported by Prof. Dr. Christoph Seibert.

Alexander Lunt

MODI

for Gestural Interface and Performer

MODI is the artistic result of Alexander Lunt's master's thesis and premiered in November 2018. The piece features different modes of contactless interaction between human and machine: in some modes the body becomes an instrument, in others a controller. The main idea is the sonification of the performer's hand movements. The setup is realized with marker-based high-speed motion capture and Max.
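One common way to sonify hand movements is parameter mapping, sketched here in Python. The specific mapping is an assumption for illustration and is not taken from MODI, which is realized in Max: hand height controls pitch on an exponential scale, and hand speed controls amplitude. The function name `hand_to_sound`, the 240 Hz frame rate, the height range and the frequency range are all hypothetical.

```python
import math

def hand_to_sound(pos, prev_pos, dt, f_low=110.0, f_high=880.0,
                  height_range=(0.8, 2.0), v_max=2.0):
    """Map one motion-capture frame of a hand marker to (frequency_hz, amplitude).

    pos, prev_pos: (x, y, z) marker positions in metres, with y pointing up.
    dt: time between the two frames in seconds."""
    y_min, y_max = height_range
    # normalized hand height, clamped to [0, 1]
    h = min(max((pos[1] - y_min) / (y_max - y_min), 0.0), 1.0)
    # exponential mapping: equal height steps give equal musical intervals
    freq = f_low * (f_high / f_low) ** h
    # hand speed controls loudness; a still hand is silent
    speed = math.dist(pos, prev_pos) / dt
    amp = min(speed / v_max, 1.0)
    return freq, amp

# Two frames at a hypothetical 240 Hz capture rate: the hand rises by 5 mm.
print(hand_to_sound((0.1, 1.405, 0.3), (0.1, 1.4, 0.3), dt=1 / 240))
```

The exponential pitch mapping is chosen because pitch perception is roughly logarithmic in frequency; a linear mapping would crowd the audible intervals toward the bottom of the range.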

Developed as part of the master's thesis by Alexander Lunt.

Supervised by Prof. Dr. Marc Bangert and Prof. Dr. Thomas A. Troge.

The project was presented at the 8th Conference on Computation, Communication, Aesthetics & X (xCoAx 2020).

Jia Liu

Ring Study II/b

Live Performance with an Autonomous Pitch-Following Feedback System

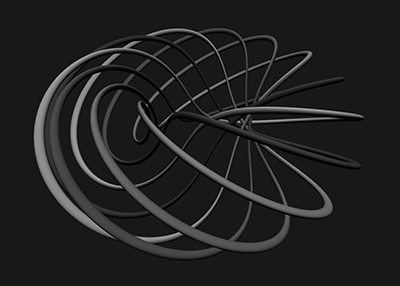

Ring Study II/b is a live coding performance for 3D loudspeaker setups, realized in SuperCollider. It uses up to 64 discrete audio channels, each playing the output of a sine oscillator that follows the frequency of another channel or of itself. Using an approach inspired by knot theory and graph theory, channels are connected and reconnected to build different knots, chains or rings that close one or more feedback loops, each creating its own characteristic behavior projected into the human listening space.

The performance is a real-time interaction with the system, mainly through controlling the topology of the signal flow (the following structures), defining following rules, and modulating the signal (frequency deviations, frequency modulation), all guided by constant observation of, and auditory feedback from, the system's behavior. The idea of an autonomous pitch-following feedback system derives from its twin work, Ring Study II/a, an "analog" version for singers who stand in a circle and follow each other's pitches. Both pieces explore, each in its own way, how the deviations and inaccuracies of each individual agent influence the overall effect, the spatial performance and the development over time.
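The following behavior can be simulated abstractly in Python/NumPy. This is a hypothetical sketch rather than the SuperCollider implementation: instead of tracking the pitch of an audio signal, each channel reads the current frequency of the channel it follows directly and glides a fraction `rate` of the way toward it per control step, and `deviation_cents` adds a random pitch error per step to mimic the inaccuracy of an individual agent. The list `targets` encodes the topology, so a ring and a chain are simply two different index lists. All names and parameter values are illustrative.

```python
import numpy as np

def simulate(freqs, targets, rate=0.05, deviation_cents=0.0, steps=2000, seed=0):
    """Simulate channels that each glide toward the frequency of the channel they follow.

    freqs:   initial oscillator frequencies in Hz
    targets: targets[i] is the index of the channel that channel i follows
             (i itself means the channel holds its own pitch)
    rate:    fraction of the remaining distance covered per control step
    deviation_cents: standard deviation of a random pitch error added per step"""
    rng = np.random.default_rng(seed)
    f = np.asarray(freqs, dtype=float).copy()
    targets = np.asarray(targets)
    history = [f.copy()]
    for _ in range(steps):
        f = f + rate * (f[targets] - f)
        if deviation_cents:
            f = f * 2.0 ** (rng.normal(0.0, deviation_cents, f.size) / 1200.0)
        history.append(f.copy())
    return np.array(history)

start = [220.0, 277.0, 330.0, 415.0, 440.0, 523.0, 660.0, 880.0]
n = len(start)
ring = [(i + 1) % n for i in range(n)]         # closed ring: one feedback loop
chain = [min(i + 1, n - 1) for i in range(n)]  # open chain: the last channel leads

ring_end = simulate(start, ring)[-1]
chain_end = simulate(start, chain)[-1]
noisy_end = simulate(start, ring, deviation_cents=5.0)[-1]
```

Without deviation, the closed ring settles on the average of the starting frequencies (each step leaves their sum unchanged), whereas the open chain collapses onto the pitch of its leading channel; with a nonzero deviation the ring keeps drifting instead of settling.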

Developed in the context of the course Kreative Programmieren 3.

Supervised by Patrick Borgeat and Prof. Dr. Christoph Seibert.

The project was presented at the International Computer Music Conference 2019 (ICMC-NYCEMF 2019) in New York City.

Michele Samarotto, Daniel Kurosch Höpfner

Collaborative Audio-Visual Live Coding

A Graphical Approach to Dynamic Wave Terrain Synthesis through Shader-Programming

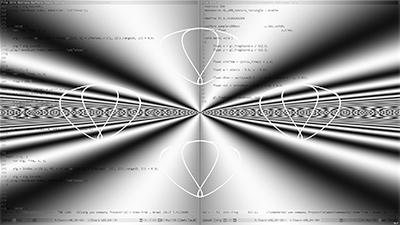

The practice of live coding has established itself as a relevant approach to creating and performing computer music, emphasizing the possibilities and challenges of improvisation in computer-based music performance. In most collaborative live coding scenarios, individual performers act as autonomous instrumentalists. In search of other collaborative performance modalities, we explore particular kinds of multimodal interdependencies in an audiovisual duo performance in which each performer live codes one of the two constituent parts of a sound synthesis model: dynamic wave terrain synthesis.
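To make the two constituent parts concrete, here is a minimal Python/NumPy sketch of dynamic wave terrain synthesis in general, not of the duo's shader-based system. A terrain function z = f(x, y, t) changes over time, a trajectory (x(t), y(t)) scans it, and each output sample is the terrain height under the trajectory. The particular terrain formula, the elliptical orbit, the 110 Hz orbit rate and the 48 kHz sample rate are assumptions chosen for illustration.

```python
import numpy as np

SR = 48_000  # sample rate in Hz (assumed)

def terrain(x, y, t):
    """Hypothetical dynamic terrain: a 2-D surface that slowly morphs with time t."""
    return np.sin(3.0 * x + 0.5 * t) * np.cos(2.0 * y - 0.3 * t)

def trajectory(t, freq=110.0, radius=0.8):
    """Elliptical orbit over the terrain; the orbit rate sets the fundamental."""
    phase = 2.0 * np.pi * freq * t
    return radius * np.cos(phase), 0.6 * radius * np.sin(phase)

def wave_terrain(duration):
    t = np.arange(int(SR * duration)) / SR
    x, y = trajectory(t)     # the trajectory part: where the surface is read
    return terrain(x, y, t)  # the terrain part: what the surface looks like

signal = wave_terrain(0.5)
```

Because `terrain` and `trajectory` only meet in `wave_terrain`, each could in principle be rewritten live by a different performer, which mirrors the division of labor described above.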

Because dynamic wave terrain synthesis relies on two independently controllable processes (terrain generation and trajectory generation) with complex interdependencies, it is particularly interesting for exploring new modes of collaborative interaction in computer-based music performance: control of each process can be delegated to an individual performer. Since the synthesis process is strongly associated with visual representations, graphical approaches can be used to control the synthesis model, creating an inherent coupling between sound and visuals and making it easier to conceptualize sound visually. This led us to design a framework for an audiovisual live coding performance based on visualizing the terrain and trajectories, and to experiment with computer graphics techniques, using shaders for terrain generation and transformation. In doing so, we derive both sound and visuals from the same underlying algorithms, which opens up the possibility of using extra-musical concepts for sound transformation.
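The idea that picture and sound share one source can be sketched as follows, in Python/NumPy rather than in an actual shader language. `render_terrain` evaluates a per-pixel function over normalized coordinates, the way a fragment shader would, and the resulting array could be displayed directly as an image; the audio is then read from that same array along a trajectory using bilinear interpolation. The ripple-shaped terrain, the image size and the circular 220 Hz trajectory are assumptions, and the duo's framework may generate, transfer and sample its terrain differently.

```python
import numpy as np

def render_terrain(width=256, height=256, t=0.0):
    """Evaluate a per-pixel terrain function, fragment-shader style.

    Pixel coordinates are normalized to [-1, 1]; the returned array is the image."""
    u = np.linspace(-1.0, 1.0, width)
    v = np.linspace(-1.0, 1.0, height)
    X, Y = np.meshgrid(u, v)  # both of shape (height, width)
    r = np.hypot(X, Y)
    return np.sin(8.0 * r - t) * np.exp(-2.0 * r)  # hypothetical ripple terrain

def sample_bilinear(img, x, y):
    """Read terrain heights at normalized positions (x, y) in [-1, 1]."""
    h, w = img.shape
    px = (np.asarray(x) + 1.0) * 0.5 * (w - 1)
    py = (np.asarray(y) + 1.0) * 0.5 * (h - 1)
    x0 = np.clip(np.floor(px).astype(int), 0, w - 2)
    y0 = np.clip(np.floor(py).astype(int), 0, h - 2)
    fx, fy = px - x0, py - y0
    top = img[y0, x0] * (1 - fx) + img[y0, x0 + 1] * fx
    bottom = img[y0 + 1, x0] * (1 - fx) + img[y0 + 1, x0 + 1] * fx
    return top * (1 - fy) + bottom * fy

SR = 48_000
image = render_terrain()              # the visual layer
t = np.arange(4_800) / SR             # 0.1 s of audio
x = 0.5 * np.cos(2 * np.pi * 220.0 * t)
y = 0.5 * np.sin(2 * np.pi * 220.0 * t)
audio = sample_bilinear(image, x, y)  # the sound, read from the same pixels
```

Any transformation applied to `image` before sampling (blurring, warping, recoloring) changes the sound and the picture at once, which is the coupling the framework relies on.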

Independent study project.

Supervised by Patrick Borgeat and Prof. Dr. Christoph Seibert.